Algorithms: the peace we lost, the truth we gained

How breaking echo chambers made us angrier but wiser

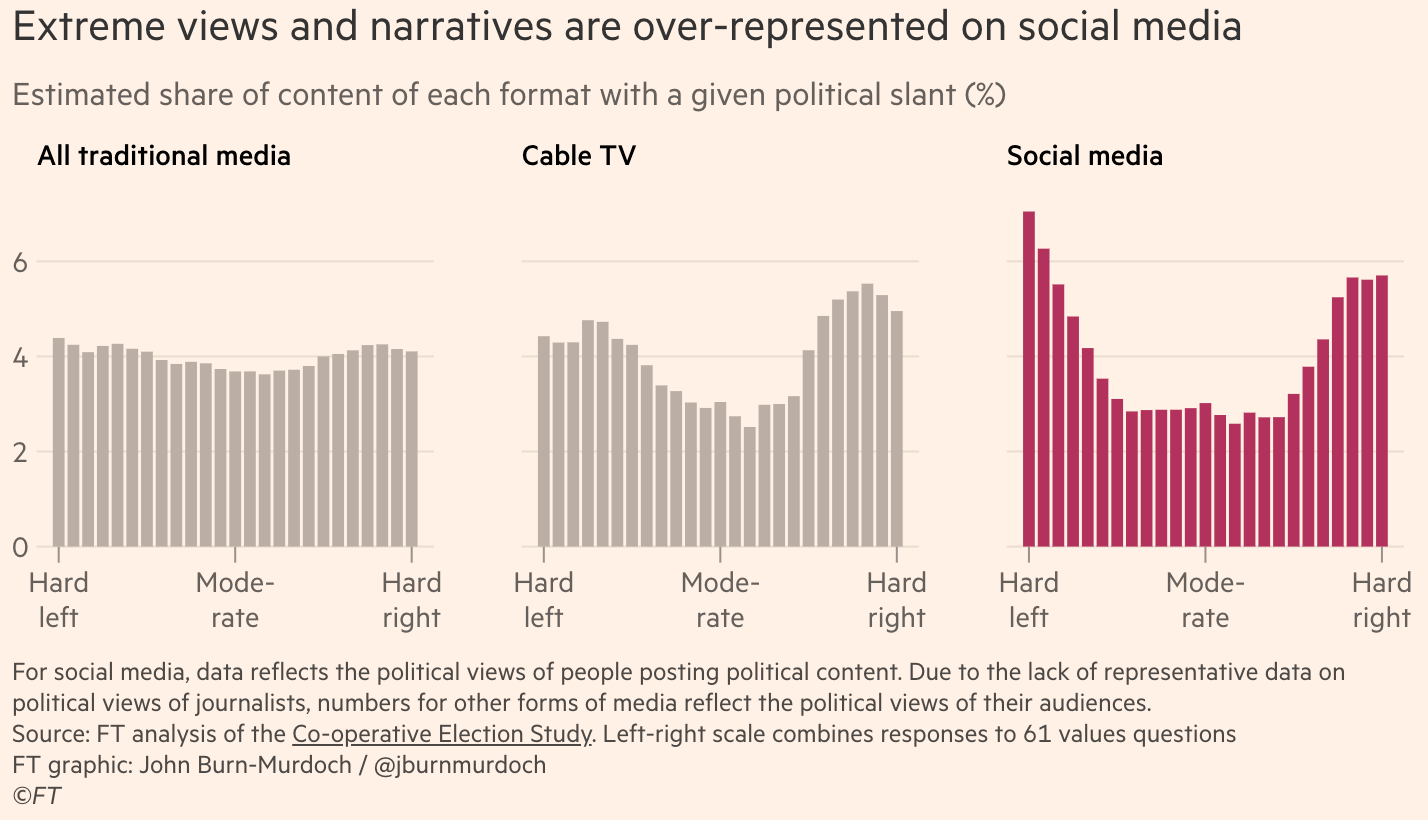

A common narrative you’ll find in politico-cultural discourse these days is that the internet and social media are contributing to political polarisation and extremism by trapping people in echo chambers. Social media algorithms highlight the most extreme voices on either side of the political aisle and only show people what they want to see, thus driving people further and further to the extremes. Technology created echo chambers; echo chambers created polarisation and extremism, so the theory goes. According to this narrative, algorithms and echo chambers go hand in hand—and both are evil.

To combat the evil of echo chambers, several new media companies have tackled the problem head-on by forcibly bringing together different sides of politico-cultural debates. Podcasts like Triggernometry pride themselves on their centrism[1], level-headedness, and willingness to engage both sides (they recently hosted Ted Cruz and then Mehdi Hasan to discuss the Iran war from opposing standpoints, for example). Jubilee Media goes one further by frequently hosting combative debates, most aggressively in the Surrounded format, whereby a single high-calibre debater is surrounded by 20 amateur disagreeing voices who take turns trying to take down their shared ideological enemy.[2] Piers Morgan, the world’s premier example of boomer liberalism, runs a show called Piers Morgan Uncensored where he invites on proponents of opposing views, lets them shout at each other, and then proceeds to censor them by constantly interrupting them. Even the hallowed debating halls of the Oxford and Cambridge Union Societies, with their debates now filmed and uploaded to YouTube, can claim to contribute to the dismantling of echo chambers. Perhaps the strongest claim to anti-echo chamberism can be made by Ground News, which aggregates news stories from outlets with transparent partisanship labels and puts them all in the same place so that people can identify and overcome media bias.

Sounds great. So perhaps we can be expecting our increasing political polarisation to reverse some time soon, given all of these corrective efforts, right? I’m doubtful.

Despite all of these anti-echo chamber efforts, I really don’t feel like polarisation is getting much better. In fact, it seems to be getting worse. Contrary to their stated intention, I think these echo-chamber-defying platforms are directly contributing to the increased polarisation, and it’s not because they are failing to break people out of their echo chambers—they undoubtedly succeed in that goal. Instead, I conjecture that echo chambers are, in fact, a healthier psychological environment for humans to exist in and probably act to temper political polarisation. But, as I will go on to argue, perhaps this isn’t the correct North Star to aim for.

Echo chambers require gatekeepers

Prior to social media and the internet, the diversity of news sources and opinions available to the public was much more limited, and it was more geographically constrained. This meant that people living in the same area were fed broadly similar information and were thus all in the same echo chamber.[3] While this made them susceptible to centrally controlled narratives, it also ensured a certain homogeneity of public opinion.

It wasn’t just news, though. All cultural products were disseminated by gatekeepers, rather than being algorithmically optimised for attention. People broadly knew and liked the same music, the same movies, the same ideas.

As Asa Park put it in his brilliant video essay, Why we were told to hate Nickelback:

This meant that on Monday mornings at work or at school, millions of people can talk about the same TV shows they’ve all watched Sunday night; the same songs they’ve all heard on their morning commute. You could say that pop culture was a shared language. Radio DJs decided what songs got played. Blockbuster decided which videos got stocked on their shelves. MTV decided which music videos got into rotation. Essentially, this was gatekeeping. And it sounds restrictive, but behind that gate was our culture. Our shared reference points. Our common ground.

In other words, we lived firmly in echo chambers.

At the level of the individual, this was a wonderful existence. The people around us were predictable and familiar. Small talk with a random stranger would be much more likely to reveal common interests. Encounters with other humans didn’t require a “fight or flight” cognitive response as default.

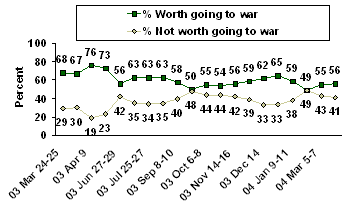

At the level of the society, though, the information ecosystem was constrained. Overton windows were narrow. Dogmata were firmly embedded. For example, the public opinion of the US invasion of Iraq in 2003 was largely positive. In hindsight, with more information, views on the Iraq invasion are now much less favourable. The key difference here is “with more information”. The point is that, in 2003, information was gate-kept, Americans lived in an echo chamber, and they held opinions that were more or less given to them on a plate, rather than them being empowered to decide for themselves based on all of the available information. There are many such cases of society being led away from the truth under a unified narrative due to gate-kept information (I’m sure the reader can think of a few examples even from the last five years alone).

And yet these unified narratives were beneficial at the psychological level. As the philosopher Joseph James Rogan Jr. recently recounted:

You know, I remember I've talked about this before, but post-9/11, everyone was so connected. Everyone was smiling. People were letting you get on the highway. They're letting you get in their lane. They're waving. Everyone had an American flag on their car. We've been attacked. We were united, you know. And it's just sad that it takes something like that for people to realize, like, this is a gift to be alive in this incredible country at this incredible time in history.

A silver lining to a tragedy, the shared sense of civic compassion Rogan speaks of was only possible due to the echo chambers that Americans lived in at the time. Had podcasters and social media been around at the time, the national narrative of the events of 9/11 would have been fractured and distorted. Otherwise fringe views would have seeped their way into the political conversation and Americans would have been much less likely to have been able to relate to each other on common ground.

Thus, at the level of the individual and from a psychological perspective, echo chambers were pleasant and beneficial, but at the level of a society and from the perspective of seeking the truth, echo chambers were destructive. Ignorance was bliss, and people were blissful. That’s no longer the case now, though.

Breaking down the gates: the algorithm

Now that we live in an era of free-flowing information (in large part thanks to large independent social media platforms like X, TikTok, and Instagram/Facebook), information is no longer curated by gatekeepers, but distributed by algorithms.[4]

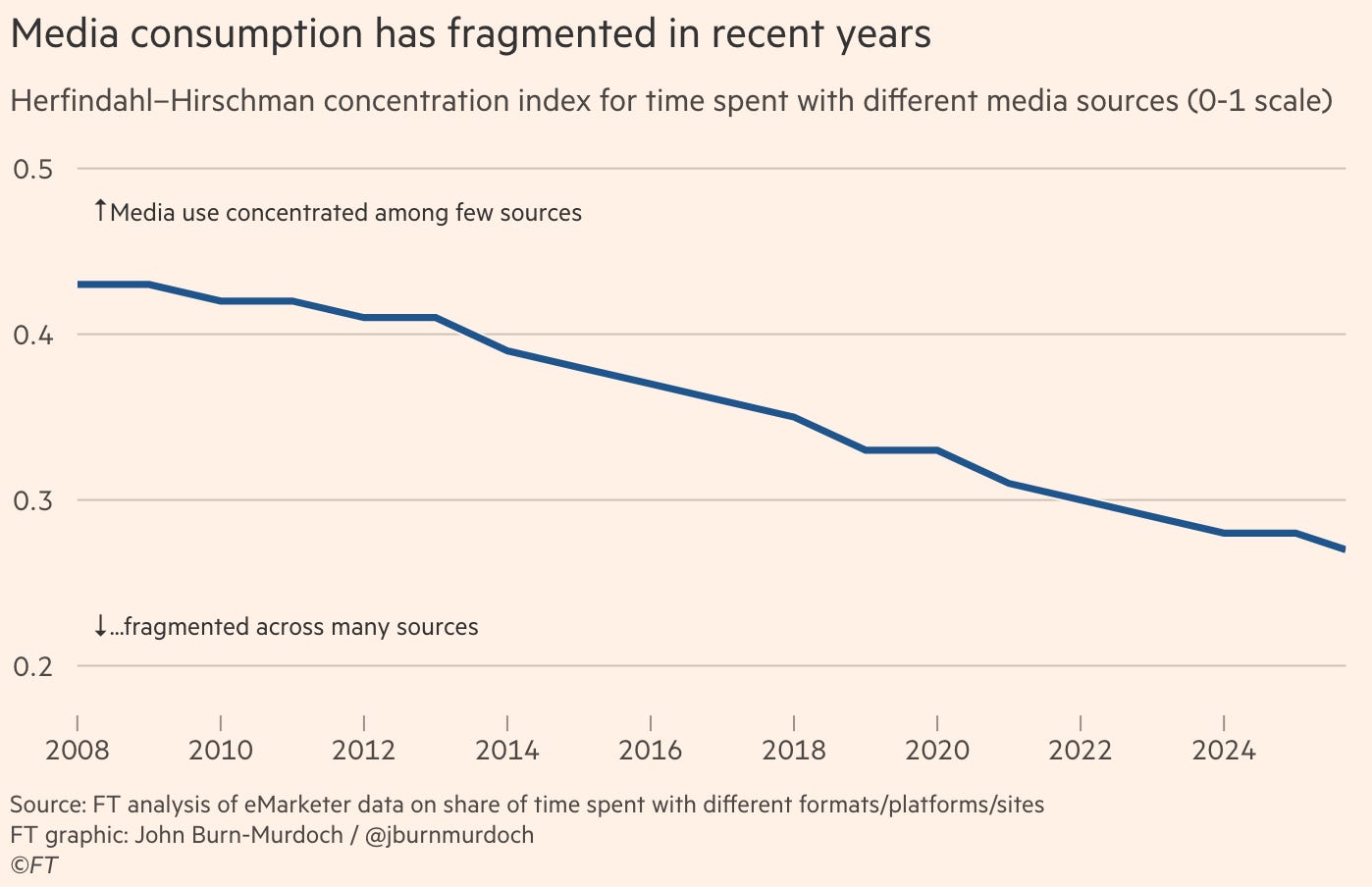

Our sources of information have diversified as algorithms have replaced gatekeepers, which is great for disabling censorship and allowing for free speech to take its course. In purely economic terms, decentralising the information market like this will always lead to a more efficient pursuit of the truth. Indeed, worries about algorithms exacerbating misinformation seem to be largely overblown and not supported by any objective data. The reality is that more people know more stuff in 2026 than they did in 2003. More often than not, misinformation alarmism merely reflects the reactive desperation of the gatekeepers trying to cling to their historic power.

While gatekeepers could engineer public opinion on war in the Middle East twenty years ago, they are powerless to do so today. The diversified information network managed by algorithms gives the public much more information, and the most interesting and important information inevitably rises to the top. Perhaps that is partly why public sentiment towards the recent and ongoing Iran war is so much less favourable than it was at an equivalent stage during the Iraq war. An algorithmically informed populace is a better informed, more sceptical populace.

Okay, so ignoring any potentially negative psychological impacts on the individual, algorithms are good? A welcome change from the gate-kept echo chambers of the past? With an ideal algorithm, yes.

The only slight issue is that the invisible hand which would normally guide the attention economy is not entirely invisible. It is a deliberately constructed algorithm. Tweaking what the algorithm rewards and what it punishes will boost and suppress different kinds of social media posts to different degrees. Maybe information isn’t gate-kept now, but it’s still very much manipulated, squeezed, and pruned. The marketplace of ideas isn’t an entirely free market. The algorithms are imperfect.

Imperfect algorithms

These algorithms typically optimise for time spent on-app. Attention, that is. At a mechanistic level, this means that many of the social media algorithms reward replies to a post much more heavily than likes on a post (think how much more attention typing out a reply requires compared to simply clicking “like”—and how much more attention is garnered from other users by a post with a rich comment section). For X’s algorithm, which is largely open source, if the original poster replies to those replies, then that boost is enhanced even further. This mechanic is designed to foster deeper conversations and commentary.
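To make the mechanic concrete, here is a toy sketch of an engagement-weighted feed ranker. Everything in it is an assumption for illustration: the weights, the `Post` fields, and the function names are hypothetical and are not taken from X's or any other platform's real code. All it shows is that once replies count for more than likes, a contested post can outrank a far more "liked" one.

```python
from dataclasses import dataclass

# Hypothetical weights, chosen only to reproduce the ordering described
# in the text (author-answered reply > reply > like). Not real platform values.
LIKE_WEIGHT = 1.0
REPLY_WEIGHT = 10.0
AUTHOR_REPLY_WEIGHT = 50.0


@dataclass
class Post:
    name: str
    likes: int
    replies: int
    replies_answered_by_author: int = 0


def engagement_score(post: Post) -> float:
    """Weighted sum of engagement signals for a single post."""
    return (
        LIKE_WEIGHT * post.likes
        + REPLY_WEIGHT * post.replies
        # Extra bonus on top of the plain reply weight when the poster answers.
        + AUTHOR_REPLY_WEIGHT * post.replies_answered_by_author
    )


def rank_feed(posts: list[Post]) -> list[Post]:
    """Order posts from highest to lowest engagement score."""
    return sorted(posts, key=engagement_score, reverse=True)


# A well-liked but quiet post versus a lightly liked, heavily argued-over one.
pleasant = Post("pleasant", likes=500, replies=5)
contested = Post("contested", likes=50, replies=80, replies_answered_by_author=10)

feed = rank_feed([pleasant, contested])
print([p.name for p in feed])
```

Under these made-up weights the contested post scores 1350 against 550 for the pleasant one, despite having a tenth of the likes. The exact numbers are arbitrary; the ordering is what matters.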

Now, sometimes this feature of the algorithm works as intended. A post will be genuinely interesting and it sparks a conversation in the replies between the poster and various commenters. Perfect. But I’m sure you can easily see how, especially when posters are cognisant of the intricacies of the algorithm, this system might get gamed.

At the most innocent level, posters who want their post to do well can try to engineer replies/comments by prompting readers with questions (YouTubers absolutely love to end a video with a “do you agree?”, “let me know what you think in the comments down below”, etc). This is fairly organic and probably not that distorting of the information ecosystem.

More Machiavellian posters can’t merely rely on organic comments to boost their posts though. Instead, they’ll rely on an army of algo-cognisant commenters (either human or bot accounts) to spam comments once the post is up—thus boosting the post very quickly and strongly. Notably, there doesn’t need to be active coordination between the poster and the boosters—they can be entirely separate parties with separate ultimate goals but shared proximal goals. Intense boosting of Christian nationalist Nick Fuentes’ posts by accounts largely originating in India, Pakistan, Nigeria, Malaysia, and Indonesia is a case in point.

Perhaps the most pernicious of all algorithm-gaming strategies is a technique known as rage baiting. Due to the conditions our minds evolved in, human psychology has a negativity bias: we are more readily moved to take action (i.e., reply to a post) by negative emotions (frustration, hate, anger) than by positive ones (curiosity, kindness, creativity). Rage baiting involves posting deliberately false or frustratingly stupid content designed to grab people by the amygdala and coerce them to correct or berate the rage baiter, thus boosting the visibility of the post.[5]

Echo chambers create consensus; algorithms create polarisation

Algorithms shattered echo chambers and in so doing have decentralised and increased the quantity of information available. I consider this to be a good thing for getting to the truth—certainly preferable to being fed an incorrect consensus. But the stability of a democracy perhaps doesn’t depend on truth. Perhaps we can blame algorithms (or their creators, at least) for something. As John Burn-Murdoch put it:

For all the attention given to misinformation, a narrow focus on objective falsehoods distracts us from a much more fundamental shift. The emerging democratic risk is not so much that people believe false things — they always have. It is that they no longer believe the same things as one another, false or otherwise.

This is the political version of what Asa Park was getting at.

But if people believe different things in the age of the algorithm, is that not just another way of saying that algorithms reinforce echo chambers? Are Triggernometry, Ground News, Piers Morgan et al. actually correct?

No. The polarised ecosystem of social media is well-documented, but people tend to misinterpret what’s actually going on.

When people see a graph like the one above, they imagine two groups of people: one shown only far left views and one shown only far right views. But the data points here are views/narratives in posts, not people. Social media users are much more likely to encounter views from both ends of the spectrum. Indeed, as someone on the right myself[6], I encounter all manner of far left content on my feeds. Showing me that stuff is more likely to get a rise out of me. Perhaps I’ll comment or share it with a friend so we can ridicule it together. A right wing echo chamber simply wouldn’t grab my attention in the same way: I’d just like, scroll past, and then log off.

In reality, people believe different things not because they are exposed to too little information from the other side, but because they are shown too much information from the other side. Algorithms create polarisation by showing you stuff outside of your would-be echo chamber.

Contra Ground News

A few years ago I believed in the assertion that our psycho-political woes were, at their root, echo chamber-induced anxiety. I came across this new media organisation that promised to find the middle ground on news stories. They present headlines on the same issue from diverse sources to show how media bias shapes opinion. They appeal to well-meaning people from across the political spectrum. They even have a “blindspot” feature, which shows you stories that had been missed by sources that lean in favour of your own bias (we’ve all heard the podcast ad reads …). What a brilliant initiative, I thought. I decided to follow Ground News on Instagram.

Their actual product seems to be really good. The problem is combining their mission with social media algorithms: ~90% of the time I see their posts, I come away enraged. The news item will typically already be known to me (aggregating and then disseminating news is obviously slower than getting it straight from the horse’s mouth). What enrages me is usually the comments. Opinions from ignorant idiots who I don’t know and don’t care about. They’re so ill-informed! They draw all the wrong conclusions! What total morons!

I’ll spend more time scrolling through the comments, getting angrier as I do. Sometimes I’ll reply with a long response to a nameless faceless comment that I disagree with. Sometimes it’s an extended back and forth of asinine bickering. Sometimes I’ll catch myself and delete my comment before posting it. Invariably, the net result is that I come away emotionally worse off and with a dimmer view of my fellow humans. Of course, many of the likes and comments I see are probably not human-generated at all.

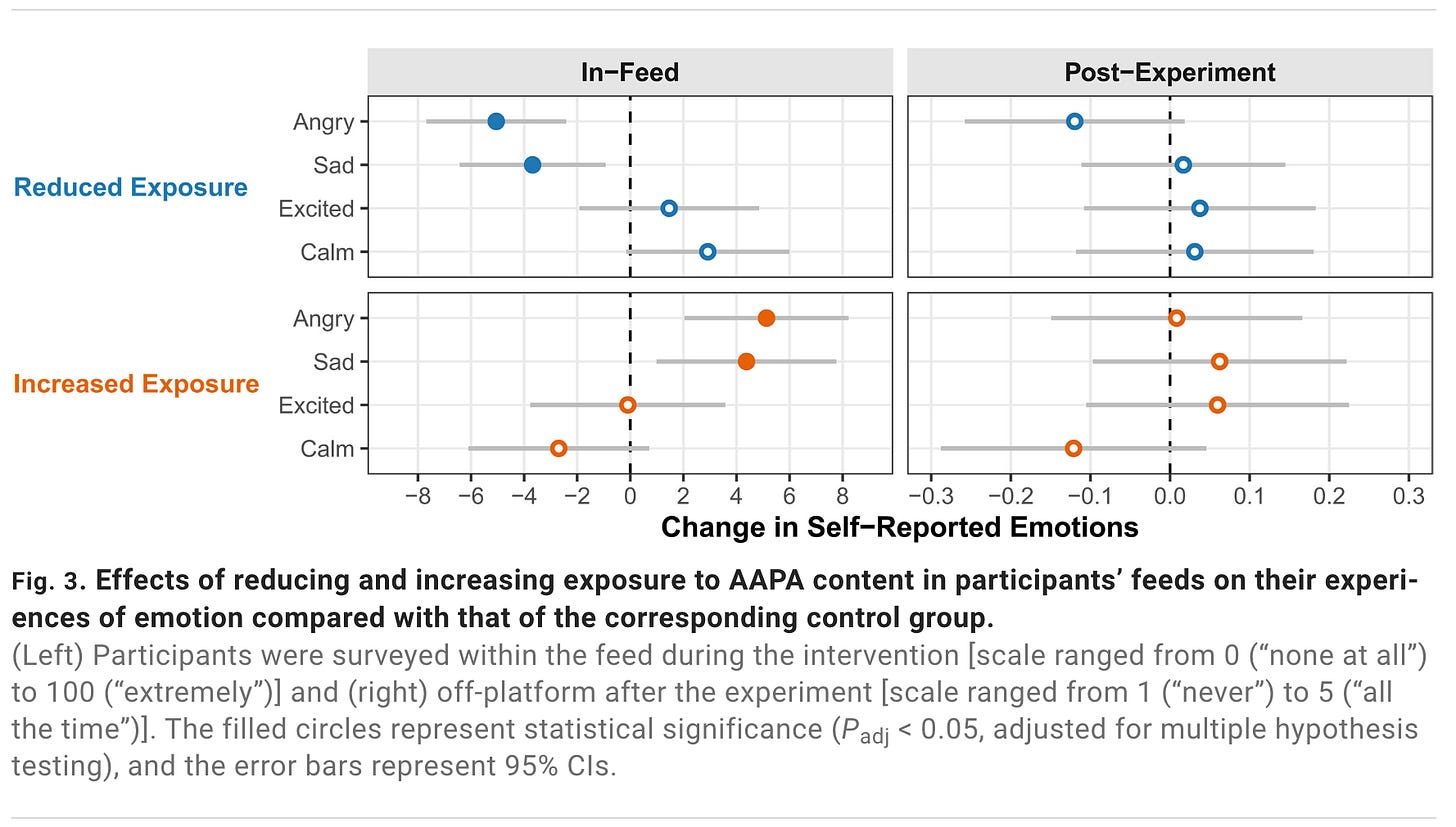

Beyond personal anecdote, it seems my emotional response here is validated by studies, including a 2025 interventional randomised study that used an LLM-based browser extension to re-rank participants’ algorithmic feeds in real time. Users were randomised to see an increase or decrease in the prevalence of “antidemocratic attitudes and partisan animosity” (AAPA) content. Corroborating my own experience, users who saw more stuff outside their echo chambers were acutely angrier and sadder.

I now realise that the problem isn’t that I’m not fighting hard enough in the comments. It’s not that these people, who hold views I find to be bad and wrong, exist. The problem is that I’m not really supposed to ever encounter such people. Human psychology has not evolved to thrive outside of an echo chamber. We’re wired to resolve political conflicts, by brain or by brawn, and then form new, stable echo chambers.

It is this conflict-resolution drive that the likes of Jubilee, Piers Morgan Uncensored, Ground News, etc., capitalise on. Modern day intellectual Roman colossea are a good business model, it seems. Just don’t be fooled into thinking that these platforms ever want there to be a resolution to the conflicts. The highs and lows of cognitive tribal warfare are the point, and we consumers should at least be aware of this if we are to continue attending the circus.

And yet, such conflict is essential for the truth to emerge.

If we want to continue to have the most information we can have and understand the world around us as deeply as possible, we must exist in a state of constant exposure to people and things that spike cortisol. We cannot return to the echo chamber. We cannot give up the unrivalled access to information that we, in 2026, have, nor our freedom to decide what to believe in. Humanity’s progress towards the good and the true depends on us being cursed with knowledge—fractured, diverse, and competing knowledge.

In the centre of the garden of Eden, there was a tree which bore fruit that, if eaten, conferred moral awareness: a knowledge of what is good and true and what is bad and wrong. Man contravened his better sense and chose to consume the fruit.

The replacement of gate-kept echo chambers with algorithmically distributed information networks offers Man the same choice once again. Perhaps going down this path represents a second Fall. Perhaps doing so is the inescapable fate of humanity. Ultimately I believe all of us are free to make the choice ourselves. In the same breath that we criticise the algorithms, we must also praise them for what they have given us. Choose: embattled gnosis or controlled, ignorant bliss.

[1] Co-host Konstantin Kisin calls himself “politically non-binary”, but I think this is mainly because he is (still) embarrassed by the epithet “conservative”.

[2] For example, they recently teed up vegan philosopher Jack Symes against a circle of mostly-midwit meat-eaters. I’ll be writing up my rebuttal of his case for veganism in the near future.

[3] The definition of “area” varied by type of information. Could be country, city, school, campus, etc.

[4] I’m going to carry on referring to “algorithms” and “the algorithm” interchangeably. Obviously the specific algorithms are constantly being tweaked over time and are different between platforms too. They all broadly converge on the same mechanisms for attention harvesting though.

[5] By the way, I’m pretty sure the Substack algorithm does not reward rage-baiting as handsomely as other algorithms do. Comments on essays seem to be a much lower boost signal than likes and restacks. I think this contributes to the great information ecosystem here.

[6] I’d consider myself to be a technoprogressive anarcho-capitalist with morally conservative foundations. But these categories are all pretty arbitrary, meaningless, and contradictory anyways. In general I find the habit of categorising people’s views to be lazy and incurious, and it degrades precision and accuracy.